Revolutionary 5G: Transforming Global Connectivity

Introduction

The rollout of 5G technology represents a major leap forward in global connectivity, with the potential to change how we live, work, and interact with the world. Compared with its predecessors, 5G offers significantly faster speeds, lower latency, and greater capacity, opening the way for a wide range of new applications and services across diverse sectors. However, the impact of 5G extends far beyond faster downloads: it is reshaping network infrastructure, driving economic growth, and presenting both major opportunities and considerable challenges.

The Speed and Capacity Revolution:

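The most immediately noticeable impact of 5G is its increase in speed. 4G networks offered speeds adequate for many applications, but 5G is designed for far higher data rates: the ITU's IMT-2020 requirements specify a peak downlink rate of 20 Gbit/s, and peak speeds are often quoted as up to 100 times those of 4G, although real-world performance depends heavily on spectrum, network load, and signal conditions. In practice this means smoother high-definition video streaming, much faster downloads, and more responsive online gaming. This higher speed is not merely a convenience; it enables applications that earlier networks could not support.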
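To put the speed difference in concrete terms, the ideal transfer time for a file is simply its size divided by the data rate. The minimal Python sketch below assumes illustrative sustained rates of 50 Mbit/s for 4G and 500 Mbit/s for 5G; these are not measured figures, real-world rates vary widely, and the function name `download_seconds` is hypothetical.

```python
# Rough, idealised download-time comparison. The rates below are
# illustrative assumptions, not measured 4G or 5G performance.


def download_seconds(file_size_gb: float, rate_mbps: float) -> float:
    """Seconds to transfer file_size_gb gigabytes at rate_mbps megabits/s.

    Uses decimal units (1 GB = 8,000 megabits) and ignores protocol
    overhead, congestion, and radio conditions.
    """
    megabits = file_size_gb * 8_000
    return megabits / rate_mbps


# Hypothetical sustained rates in megabits per second.
ASSUMED_RATES_MBPS = {
    "4G (assumed 50 Mbit/s)": 50,
    "5G (assumed 500 Mbit/s)": 500,
}

if __name__ == "__main__":
    size_gb = 5.0  # e.g. a feature-length HD film (assumed size)
    for label, rate in ASSUMED_RATES_MBPS.items():
        print(f"{label}: {download_seconds(size_gb, rate):.0f} s")
```

Under these assumptions a 5 GB file takes 800 seconds on the 4G rate and 80 seconds on the 5G rate; a tenfold rate increase cuts the ideal transfer time tenfold.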

The increased capacity of 5G networks is equally significant. Connecting many more devices simultaneously without compromising performance is crucial in our increasingly interconnected world. This is especially relevant for the Internet of Things (IoT), where billions of devices, from smart appliances to autonomous vehicles, require reliable connectivity, and some of them, such as video cameras and vehicles, also need high bandwidth. The sheer volume of data generated by these devices requires a network infrastructure capable of handling the load, and 5G was designed with this in mind: the IMT-2020 requirements target a connection density of 1,000,000 devices per square kilometre.
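Capacity planning for IoT comes down to adding up what every device class sends. The sketch below sums count × average rate over a hypothetical fleet; the device classes, counts, and rates in `EXAMPLE_FLEET` are invented for illustration, and the helper names are not from any real planning tool.

```python
from typing import Dict, Tuple

# Hypothetical device fleet: class name -> (device count, average kbit/s per device).
# These numbers are illustrative assumptions only.
EXAMPLE_FLEET: Dict[str, Tuple[int, int]] = {
    "environmental sensors": (10_000, 2),
    "HD security cameras": (200, 4_000),
}


def aggregate_load_kbps(fleet: Dict[str, Tuple[int, int]]) -> int:
    """Total average traffic in kbit/s: sum of count x rate over all classes."""
    return sum(count * rate for count, rate in fleet.values())


def device_count(fleet: Dict[str, Tuple[int, int]]) -> int:
    """Total number of simultaneously connected devices in the fleet."""
    return sum(count for count, _ in fleet.values())


if __name__ == "__main__":
    total_kbps = aggregate_load_kbps(EXAMPLE_FLEET)
    print(f"{device_count(EXAMPLE_FLEET)} devices, "
          f"about {total_kbps / 1000:.0f} Mbit/s in aggregate")
    for name, (count, rate) in EXAMPLE_FLEET.items():
        print(f"  {name}: {count * rate / 1000:.0f} Mbit/s")
```

In this made-up fleet, 200 cameras generate 800 Mbit/s while 10,000 sensors add only 20 Mbit/s, so the two kinds of device stress the network differently: a few high-rate devices dominate bandwidth, while many low-rate sensors mainly add to the number of simultaneous connections.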

Transforming Industries:

The impact of 5G extends far beyond individual consumers; it is poised to transform entire industries. Here are some key examples:

- Healthcare: 5G's low latency and high bandwidth can support remote surgery, telemedicine, and real-time monitoring of patients' vital signs. This has the potential to transform healthcare delivery, especially in remote or underserved areas. Imagine a surgeon in a major city operating on a patient hundreds of miles away, guided by real-time, high-resolution images transmitted over 5G. Remote surgery over 5G has been demonstrated in trials, although routine clinical use still faces regulatory and reliability hurdles. Moreover, remote monitoring allows for proactive intervention, potentially preventing serious health crises.

- Manufacturing: 5G is enabling the development of smart factories, where robots and machines communicate seamlessly, optimizing production processes and increasing efficiency. Predictive maintenance, enabled by real-time data analysis, minimizes downtime and reduces costs. The integration of 5G into industrial control systems promises to significantly enhance productivity and improve safety within manufacturing environments. Automated guided vehicles (AGVs) and collaborative robots (cobots) can operate more effectively with the speed and reliability of 5G, leading to leaner and more responsive production lines.

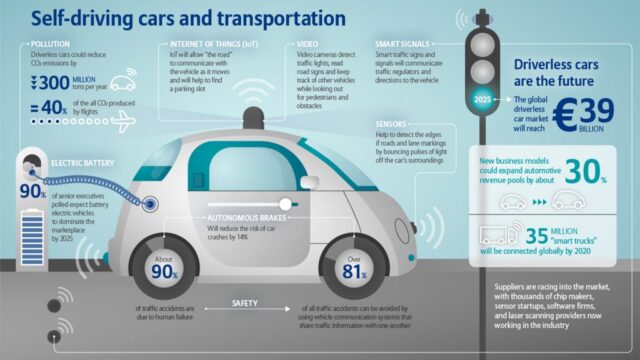

- Transportation: Connected and autonomous vehicles can use 5G's low latency to communicate with each other and with roadside infrastructure (vehicle-to-everything, or V2X, communication). Self-driving vehicles make their core driving decisions using onboard sensors and computers, but fast, reliable network links can supplement them with information beyond their line of sight, such as warnings about hazards ahead. 5G can also support the complex communication networks that smart traffic management systems need to optimize traffic flow and reduce congestion, which could shorten travel times, lower fuel consumption, and reduce accidents. Reliable 5G connectivity can likewise benefit high-speed rail and other forms of public transport.

- Agriculture: Precision agriculture, which uses sensors and data analytics to optimize crop yields, benefits greatly from 5G connectivity. Farmers can monitor soil conditions, weather patterns, and crop health in real time, allowing for better-informed decisions and increased efficiency. Drones equipped with high-resolution cameras and sensors can collect vast amounts of data, which can then be analyzed with AI and machine learning algorithms to optimize irrigation, fertilization, and pest control. This can lead to higher yields, lower resource consumption, and a more sustainable agricultural sector.

- Energy: Smart grids, which use advanced sensors and data analytics to optimize energy distribution and consumption, can benefit from 5G's capacity and reliability. Real-time monitoring of energy usage allows for more efficient allocation of resources and reduces energy waste. 5G can also help integrate renewable energy sources, such as solar and wind power, into the grid: real-time data exchange between these sources and the grid allows for better management of fluctuating power generation and a more stable energy supply.
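Latency matters most in transportation because a moving vehicle keeps travelling while a message is in flight. The sketch below computes the distance covered during a given delay; the speed and delay values are illustrative assumptions, not measurements of any particular network. For reference, the ITU's IMT-2020 requirements set a 1 ms user-plane latency target for ultra-reliable low-latency communication.

```python
def distance_during_delay_m(speed_kmh: float, delay_ms: float) -> float:
    """Metres travelled at a constant speed_kmh during a delay of delay_ms."""
    metres_per_second = speed_kmh * 1000 / 3600  # km/h -> m/s
    return metres_per_second * delay_ms / 1000   # ms -> s


if __name__ == "__main__":
    speed = 100.0  # km/h, an assumed motorway speed
    for delay in (100.0, 10.0, 1.0):  # illustrative delays in milliseconds
        print(f"{delay:>5.0f} ms -> {distance_during_delay_m(speed, delay):.3f} m")
```

At 100 km/h, a vehicle covers roughly 2.8 m during a 100 ms delay but under 3 cm during 1 ms, which is why lower latency matters for time-critical warnings between vehicles and infrastructure.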

Challenges and Considerations:

Despite its immense potential, the widespread adoption of 5G faces significant challenges:

- Infrastructure Investment: Building a comprehensive 5G network requires substantial investment in infrastructure, including new cell sites, antennas, and other equipment, particularly the dense networks of small cells needed for high-frequency millimetre-wave bands. This can be especially challenging in developing countries with limited resources. The cost of deployment and the need for widespread coverage pose a significant hurdle to the global adoption of 5G.

- Spectrum Allocation: The availability of suitable radio frequencies is crucial for the successful deployment of 5G. Governments worldwide need to carefully allocate spectrum to ensure efficient use and avoid interference. The process of spectrum allocation can be complex and politically charged, potentially delaying the rollout of 5G in some regions.

- Security Concerns: Like any networked technology, 5G networks can be targeted by cyberattacks. Robust security measures are essential to protect against unauthorized access and data breaches. Because 5G networks are so interconnected, a security breach in one area could have far-reaching consequences. Ensuring the security and privacy of data transmitted over 5G networks is paramount.

- Digital Divide: The benefits of 5G are not evenly distributed. Access to 5G technology may be limited in rural or underserved areas, exacerbating the existing digital divide. Bridging this gap requires targeted investment and policies to ensure that everyone has access to the benefits of this technology. This means not only infrastructure investment but also digital literacy programs and affordable access solutions for marginalized communities.

- Health Concerns: Some groups have raised concerns about the potential health effects of radio-frequency emissions from 5G networks. Current scientific evidence indicates that exposure from 5G networks stays within international safety limits, such as those set by the ICNIRP guidelines, but addressing these concerns transparently is crucial for public acceptance. Open communication and independent research are necessary to ease public anxieties and build trust in the technology.

Conclusion:

5G technology is transforming global connectivity, bringing higher speeds, greater capacity, and new opportunities for innovation. Its impact spans numerous sectors, with the potential to reshape healthcare, manufacturing, transportation, agriculture, and energy. However, realizing the full potential of 5G requires addressing significant challenges related to infrastructure investment, spectrum allocation, security, the digital divide, and public concerns about health. Overcoming these hurdles is crucial to ensuring that the benefits of this technology are shared widely, leading to a more connected, efficient, and prosperous future. The successful deployment and integration of 5G will shape the technological landscape for years to come and significantly influence economic growth, social progress, and global competitiveness. Addressing these challenges proactively and collaboratively is key to unlocking the full potential of 5G.
